Product Prioritization that Works

One of the most important skills a product person can have is ruthless prioritization. And it makes total sense. Most teams operate in competitive spaces with finite resources, so making the right decisions about what the team builds next is a skill that can make or break a startup.

How do teams make sure they always work on the most important thing at any given moment? That depends on where the team is on its journey from relying on the product understanding of the founding team and early hires to a formal process that empowers the different product teams to make decisions.

Many startups begin with the product-focused founder calling the shots on what gets shipped next. These calls are guided by the founder's vision for the product, understanding of the market, feedback from the first customers, the push toward product-market fit, etc. That process is hard to scale once the company has several product teams, each with its own vision, goals, roadmap and KPIs.

The logical next step is for the product team to become part of the decision-making process. For many teams, this entails a transition to a more diligent product prioritization approach, which can be replicated across teams where applicable. At the core of this process is an understanding of the vision, goals and targets for each product, which is the foundation that informs product prioritization decisions.

So, given that a team has its vision, goals and objectives for the next months, how can it “productize” its decision-making process around prioritization? Not every prioritization decision will, or should, be perfectly explained on paper. Nevertheless, a product person needs to be able to approach the basic pillars that drive these decisions, such as the value of a feature for the company and the effort it requires from the team, in a data-driven and easy-to-communicate manner.

First of all, prioritization starts with creating a central repository of product ideas. This is a living document that can be shared with the rest of the team to increase visibility and transparency. Sometimes it also makes sense to group requests into themes (e.g. conversion optimization, revenue, UX improvements, SEO).

There are many frameworks for prioritizing feature ideas, some more data-driven than others. Frameworks are just frameworks: they should inform people's decisions and be adapted as teams see fit. If a team wants to keep the process lean and easy to standardize, weighted and unweighted scoring might be the best options. Both start with choosing the criteria by which teams will evaluate each feature. These criteria usually fall under two dimensions: “How important it is” and “How difficult it is to build”.

Unweighted scoring

Value vs. Effort - This is probably the most basic method, yet a great starting point. It compares the value of each feature to its effort using a scoring scale, e.g. 1-10. The end score for every feature is calculated using the formula score = value/effort. After scoring all features in your list, you can also visualize them in a Value vs. Effort Quadrant, which includes: Big Bets (high value, high effort), Quick Wins (high value, low effort), Maybes (low value, low effort) and Time Sinks (low value, high effort). Variations of this method using value vs. risk or value vs. complexity also exist.
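To make the formula and the quadrant concrete, here is a minimal Python sketch. The feature names, their scores and the cut-off of 5 that separates "high" from "low" are all assumptions made up for illustration; a team would pick its own.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    value: int   # 1-10, higher means more valuable
    effort: int  # 1-10, higher means more work


def score(feature: Feature) -> float:
    """Unweighted score: value divided by effort."""
    return feature.value / feature.effort


def quadrant(feature: Feature, threshold: int = 5) -> str:
    """Place a feature in the Value vs. Effort Quadrant.

    Scores above `threshold` count as "high"; the default of 5 is an assumption.
    """
    high_value = feature.value > threshold
    high_effort = feature.effort > threshold
    if high_value and high_effort:
        return "Big Bet"
    if high_value:
        return "Quick Win"
    if high_effort:
        return "Time Sink"
    return "Maybe"


# Hypothetical backlog, for illustration only.
backlog = [
    Feature("One-click checkout", value=9, effort=3),
    Feature("New recommendation engine", value=8, effort=9),
    Feature("Dark mode", value=3, effort=2),
    Feature("Legacy report export", value=2, effort=8),
]

# Highest score first.
ranked = sorted(backlog, key=score, reverse=True)
for f in ranked:
    print(f"{f.name}: score={score(f):.2f}, quadrant={quadrant(f)}")
```

Note that the ratio and the quadrant can tell slightly different stories: in this made-up backlog a cheap "Maybe" outranks a "Big Bet" on score alone, which is one reason to look at both.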

RICE - Another popular scoring method is RICE. This system (introduced by Intercom) measures each feature against four factors: reach, impact, confidence and effort. Each factor refers to the following:

Reach: how many people will this feature influence within a specific time period?

Impact: how much will this feature impact users?

Confidence: how confident are we about the impact and reach scores?

Effort: how much time will implementing this feature require from our teams?

The following formula is used: RICE = Reach * Impact * Confidence / Effort. Running each feature through this calculation yields a final RICE score that can be used to rank the features in priority order.
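As a rough sketch, the RICE formula translates into a few lines of Python. The candidate features and their numbers are invented, and the units (reach as users per quarter, confidence as a fraction between 0 and 1, effort in person-months) are assumptions chosen for this example.

```python
def rice_score(reach: float, impact: float, confidence: float, effort: float) -> float:
    """RICE = Reach * Impact * Confidence / Effort."""
    if effort <= 0:
        raise ValueError("effort must be positive")
    return reach * impact * confidence / effort


# Hypothetical candidates: (reach, impact, confidence, effort).
candidates = {
    "Onboarding checklist": (2000, 2.0, 0.8, 4),
    "CSV import": (500, 1.0, 1.0, 1),
    "Referral program": (3000, 1.0, 0.5, 6),
}

# Rank feature names by RICE score, highest first.
rice_ranked = sorted(
    candidates,
    key=lambda name: rice_score(*candidates[name]),
    reverse=True,
)

for name in rice_ranked:
    print(f"{name}: {rice_score(*candidates[name]):.0f}")
```

Whatever units a team chooses, the scores are only comparable if every feature is estimated with the same ones.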

ICE - A simpler version of RICE is ICE. The factors scored here are:

Impact: how impactful do we expect this feature to be?

Confidence: how confident are we that this feature will deliver the desired results?

Ease: how easy is this initiative to build and implement?

Each of these factors is often scored from 1–10, with 10 being the highest, and the average of the three is the ICE score. I have also seen examples where the end score is calculated by multiplying the factors. The exact formula is not that important as long as the same one is followed across teams in the organization.
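The two ICE variants can be sketched side by side in Python. The two ideas and their scores below are hypothetical, picked to show that averaging and multiplying can rank the same ideas differently, which is why sticking to one formula across teams matters.

```python
def ice_average(impact: int, confidence: int, ease: int) -> float:
    """ICE as the average of the three factors (each scored 1-10)."""
    return (impact + confidence + ease) / 3


def ice_product(impact: int, confidence: int, ease: int) -> int:
    """ICE as the product of the three factors (each scored 1-10)."""
    return impact * confidence * ease


# Hypothetical ideas: (impact, confidence, ease).
ideas = {
    "Rebuild search": (10, 10, 1),   # high impact, very hard to build
    "Improve tooltips": (6, 6, 6),   # moderate on every factor
}

# Pick the top idea under each variant.
best_by_average = max(ideas, key=lambda name: ice_average(*ideas[name]))
best_by_product = max(ideas, key=lambda name: ice_product(*ideas[name]))

print("Top idea by average:", best_by_average)
print("Top idea by product:", best_by_product)
```

Multiplying punishes a single very low factor much more than averaging does, so a team that cares about avoiding hard-to-build ideas may lean towards the product.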

Weighted scoring

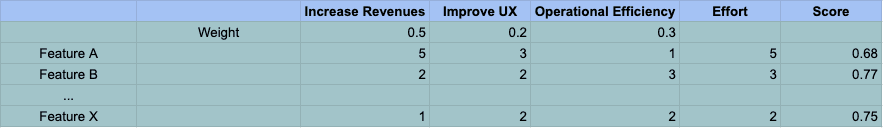

Weighted scoring is a prioritization method that follows the steps of the unweighted scoring methods, but adds a weight to each factor so that stakeholders have to agree on the relative importance of all criteria. Hence, teams decide which factors matter in terms of benefit and cost, what the weight of each factor is (out of a total of 100%), and how the end score is calculated. Different teams use different factors that make sense for them, e.g. Increase Revenues, Improve User Experience, Reduce Cost, Buyer Satisfaction, as well as different formulas. I usually prefer to introduce Effort as the divisor.
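Here is one possible way to express weighted scoring in Python, with Effort as the divisor. The factor names, the weights (50/30/20) and the example scores are assumptions for illustration; each team would agree on its own factors and formula.

```python
from typing import Mapping

# Hypothetical benefit factors and their weights in percent (must total 100).
WEIGHTS = {"revenue": 50, "user_experience": 30, "cost_reduction": 20}


def weighted_score(
    benefits: Mapping[str, float],
    effort: float,
    weights: Mapping[str, int] = WEIGHTS,
) -> float:
    """Weighted sum of the benefit scores, divided by effort."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must add up to 100%")
    if effort <= 0:
        raise ValueError("effort must be positive")
    benefit = sum(weights[factor] * benefits[factor] for factor in weights) / 100
    return benefit / effort


# Hypothetical features, each benefit scored 1-10.
checkout = weighted_score(
    {"revenue": 8, "user_experience": 4, "cost_reduction": 2}, effort=5
)
redesign = weighted_score(
    {"revenue": 3, "user_experience": 9, "cost_reduction": 1}, effort=3
)

print(f"Checkout upsell: {checkout:.2f}")
print(f"Settings redesign: {redesign:.2f}")
```

In this made-up case the lower-revenue feature wins because it is cheaper to build, which is the effect of putting Effort in the denominator.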

More methodologies

There are other techniques used by teams that don’t fall under the above categories such as the Kano model and the MoSCoW method.

The Kano model is a great guide to help teams prioritize product features based on the customer’s perception of value. Features break into four categories:

Dissatisfiers: Unless these are in place, customers won’t even consider the product as a solution to their problem.

Satisfiers: The more teams invest in these, the higher the level of customer satisfaction will be.

Delighters: These features are pleasant surprises, which the customers don’t expect, but that once provided, create a delighted response.

Indifferent factors: These are attributes that are perceived as neither good nor bad, so they do not affect customer satisfaction either way. They can be removed, saving costs, without decreasing sales.

On the other hand, the MoSCoW method categorizes features into the following buckets (the lowercase o's in the name are only there to make it pronounceable):

Must have (M)

Should have (S)

Could have (C)

Won’t have (W)

The MoSCoW method is especially useful when teams need to communicate which requirements are included in (or excluded from) a particular release, so it works best when zooming in on a specific feature.

Even though all scores in unweighted and weighted scoring are just estimates, I have found both frameworks useful when communicating prioritization decisions, as a score is attached to each feature idea. That score leads to a ranked list of features, which of course might not be the exact sequence in which the team will implement them. This is not a roadmap, nor a release plan, but rather a prioritized list of feature candidates. That is something that neither Kano nor MoSCoW supports.

Overall, the exact product prioritization process is very team-dependent. In the early days of a startup, prioritization decisions and the rationale behind them might not need to be documented explicitly. Nevertheless, as organizations scale, introducing such processes usually adds a lot of value. Apart from having the different product teams follow a similar decision-making process, the goal is to have everyone think deeply about the value and effort of a feature candidate and how it contributes to the larger goal, while being able to align on decisions and communicate them properly to the rest of the team and stakeholders.

Please do reach out to exchange ideas and share more at alex@marathon.vc or Twitter.